When it comes to web accessibility, testing your work is just as important as the build itself. Learn how to efficiently test for web accessibility using a checklist based on WCAG success criteria and how to effectively deploy your team to tackle the testing.

Let’s talk about web accessibility. Don’t stop reading! I’m not here to tell you about the importance of web accessibility; as an industry, we’re already quite aware of how important it is. I’m not even here to talk about what it takes to build accessibly. Instead, I want to talk about how you know you’ve built something accessible and how you test for it.

I could give you a generic checklist of things to test, but it wouldn’t answer the question of who should be testing what, and without that answer a checklist isn’t sustainable, adaptable, or scalable. My team is surely structured differently than yours, so I can’t tell you who should do what. I can, however, show you how to make your own checklist (way more useful) in three steps, using the Web Content Accessibility Guidelines (WCAG) as our guide:

Defining team roles

Creating a checklist

Delegating testing tasks

Step One: Defining Roles

Testing for web accessibility can be an intimidating, even overwhelming, task. There are so many things to check, and not all of them are relevant to any one role. As a web developer, I don’t always have control over the content that goes on a page. For example, if a content editor adds a complex graph to a page as an image, whose job is it to make sure appropriate alt text or a long description is written and put in place? Mine? The content editor’s? Both?

To get a better understanding of who should be responsible for what, you first have to understand how each member of your team contributes to the website. Start by listing everyone’s roles. For the purposes of this article, we’ll go with: Content Creator, Designer, Developer, and QA Tester. Now, think about the main responsibilities of each role and how the roles interact with each other:

Content Creator

Responsible for the creation of all content on the site, including text, images, and video

Responsible for creating and maintaining the page hierarchy and structure of the content

Works primarily in the CMS backend to modify content and the navigation

Collaborates with the designer when new content will not work with existing templates and when additional visual media is required

May collaborate with the developer to understand the capabilities of the CMS and request new features

Designer

Responsible for the overall design of the website, adhering to brand guidelines

Collaborates with the content creator to design templates for new content types

Collaborates with the developer to translate static design mockups into an interactive user experience, including animation and interactive components

Developer

Maintains the codebase and all environments

Responsible for building out templates that are provided by the designer

Does not usually collaborate with the content creator; content and site structure are communicated through the design

QA Tester

Reviews all new work in staging before it is released to production

New work may include new website features as well as new content added to the site

May collaborate with the designer to understand the expected user experience

May collaborate with the content creator to understand the site structure, but is less concerned with the content itself

Now that we have a better understanding of the team and their responsibilities, we can move on to creating the testing checklist.

Step Two: Testing Checklist

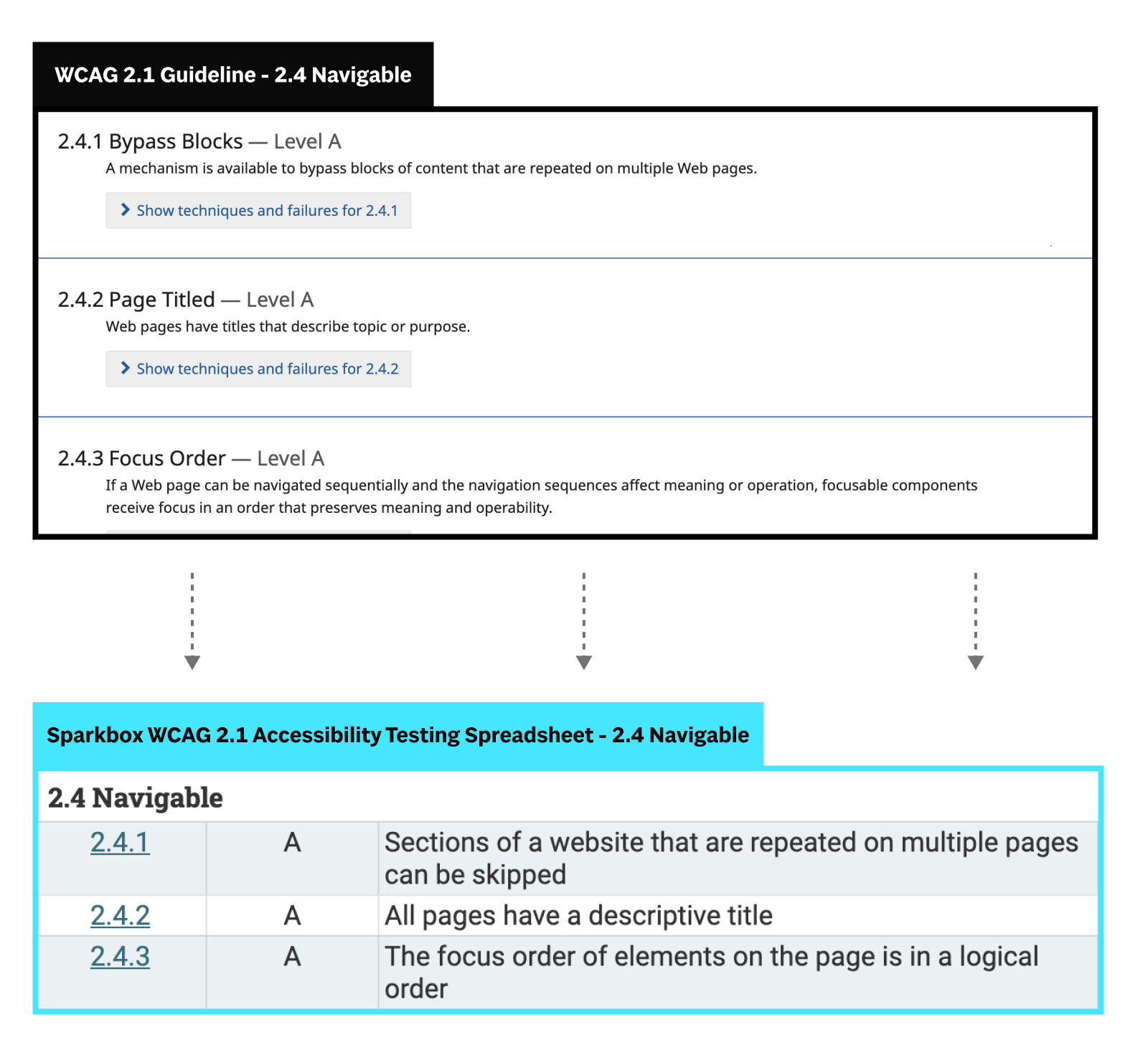

While I think that the WCAG 2.1 principles and guidelines (something I previously simplified into a 13-day study guide for my coworkers) provide a great overview of the different areas where accessibility is important, they aren’t a checklist. For instance, “Guideline 2.4 Navigable” explains that users should be able to navigate a site, find content, and determine where they are—the guideline by itself isn’t detailed enough to tell us what to test. That’s where success criteria come in.

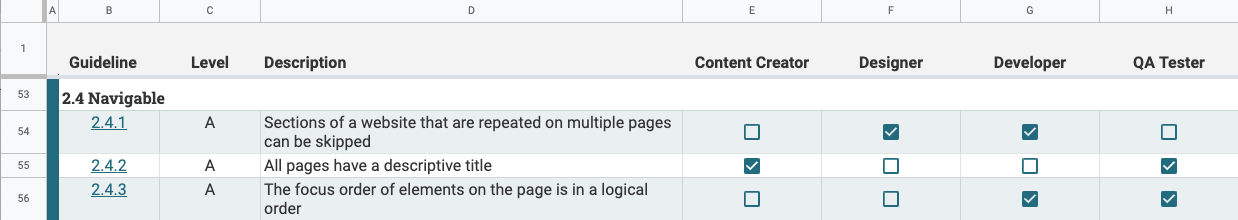

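Guidelines are broken down into individual, testable “success criteria” that identify ways in which we can meet each guideline’s requirements. Across the 13 guidelines, there are 78 success criteria in total, and they become the tasks on my checklist. Let’s revisit “Guideline 2.4 Navigable” as an example. It is broken down into ten success criteria:

2.4.1 Bypass Blocks

2.4.2 Page Titled

2.4.3 Focus Order

2.4.4 Link Purpose (In Context)

2.4.5 Multiple Ways

2.4.6 Headings and Labels

2.4.7 Focus Visible

2.4.8 Location

2.4.9 Link Purpose (Link Only)

2.4.10 Section Headings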

And just like that, we have the beginnings of a checklist. Unfortunately, we cannot assign a single person all 78 success criteria and call it a day. WCAG wasn’t written for a single role. It touches every area in which a website can be made accessible, from the content and site structure to the design and development—this is why we advocate that everyone should care about accessibility.

It really takes a village to build an accessible website.

Our final step is to combine our checklist with the team roles and start delegating testing tasks.

Step Three: Delegating Tasks

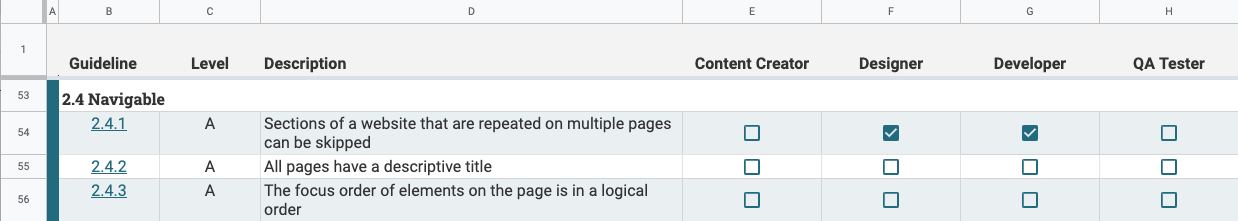

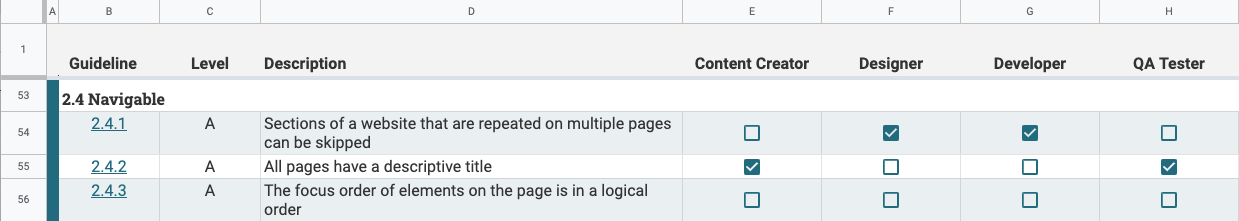

I like to plan out a testing checklist using a spreadsheet. Customized checklists and supporting information can be compiled for individual roles later, but for the planning phase, we’ll want to see all of the team roles and testing tasks together in one place to ensure that no criteria are missed.

Filling out the spreadsheet can be tedious, but we have to go through each success criterion individually and compare it against the team roles to decide which role should be responsible for testing it.

Let’s go through some examples:

2.4.1 - Sections of a website that are repeated on multiple pages can be skipped.

This success criterion requires a way to bypass blocks of content that are repeated across pages, such as a page header or sidebar navigation. The usual technique is a skip link placed before the repeated content; skip links are typically visually hidden until a keyboard user moves focus to them. Skip links are added to page templates in code, so it makes sense that the developer should be responsible for ensuring they are in place. In our testing checklist, we can place a checkmark in the developer column for the 2.4.1 row. We can also place a checkmark in the designer column, since designers need to provide styles for skip links and document which elements of the site will be repeated globally.
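Whether a skip link is present can be spot-checked before anyone reaches for a screen reader. The TypeScript below is a hypothetical sketch, not an existing testing library: it assumes you have already extracted a page's focusable elements in document order and the list of ids in its markup (for example, with a browser script or an HTML parser), and it applies the heuristic that the first focusable element should be an in-page link whose target exists. The names `PageModel` and `checkSkipLink` are illustrative.

```typescript
// Hypothetical, simplified model of a rendered page.
interface FocusableItem {
  tag: string;   // e.g. "a", "button", "input"
  href?: string; // only set for links
}

interface PageModel {
  focusables: FocusableItem[]; // focusable elements in document order
  ids: string[];               // every id attribute present in the markup
}

// Heuristic 2.4.1 check: the first focusable element should be an in-page
// link (href="#...") whose target id exists. Returns a problem description,
// or null if the check passes.
function checkSkipLink(page: PageModel): string | null {
  if (page.focusables.length === 0) {
    return "Page has no focusable elements";
  }
  const first = page.focusables[0];
  if (first.tag !== "a" || first.href === undefined || first.href.charAt(0) !== "#") {
    return "First focusable element is not an in-page link";
  }
  // Strip the leading "#" to get the id the link jumps to.
  const targetId = first.href.slice(1);
  if (page.ids.indexOf(targetId) === -1) {
    return `Skip link points to a missing id "${targetId}"`;
  }
  return null;
}
```

This only confirms the link exists and points somewhere real. Whether it becomes visible on focus is a styling question, so the designer and developer still confirm it by tabbing through the page.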

2.4.2 - All pages have a descriptive title.

On our team, designers and developers aren’t creating individual pages; they’re creating the templates from which individual pages are dynamically generated. As a result, the person responsible for creating the rest of the page content should ensure that each page has a unique and descriptive title. For 2.4.2, we’ll place a checkmark in the content creator column. Because new work is also reviewed by the QA tester, it makes sense for them to keep an eye out for descriptive page titles as well, so we’ll add a checkmark for QA testers here too.

You could potentially add a checkmark under the developer column since they would be responsible for ensuring that the page title is correctly placed on the page. However, since the content creator and QA tester are regularly checking for page titles, you can skip assigning this task to developers for now. If you find that bugs around page titles are regularly popping up, you can reevaluate.
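Presence and uniqueness are the mechanical half of 2.4.2, and they can be checked across a whole site at once. This is a minimal sketch that assumes a hypothetical export of `{ url, title }` records from your CMS; `auditTitles` is an illustrative name, not an existing tool. Whether a title is actually descriptive still takes a human reader, which is why the content creator and QA tester keep this task.

```typescript
// Hypothetical shape of a page record exported from the CMS.
interface PageRecord {
  url: string;
  title: string;
}

interface TitleReport {
  missing: string[];                         // URLs with an empty or blank title
  duplicates: { [title: string]: string[] }; // normalized title -> URLs sharing it
}

function auditTitles(pages: PageRecord[]): TitleReport {
  const byTitle: { [title: string]: string[] } = {};
  const missing: string[] = [];

  for (const page of pages) {
    const title = page.title.trim();
    if (title === "") {
      missing.push(page.url);
      continue;
    }
    // Compare case-insensitively so "About" and "about" count as the same title.
    const key = title.toLowerCase();
    if (!byTitle[key]) {
      byTitle[key] = [];
    }
    byTitle[key].push(page.url);
  }

  // Keep only titles that are shared by more than one page.
  const duplicates: { [title: string]: string[] } = {};
  for (const key of Object.keys(byTitle)) {
    if (byTitle[key].length > 1) {
      duplicates[key] = byTitle[key];
    }
  }
  return { missing: missing, duplicates: duplicates };
}
```

If a report like this keeps turning up problems, that is the signal described above to reevaluate and bring the developer into this row.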

2.4.3 - The focus order of elements on the page is in a logical order.

When a keyboard user tabs through the contents of the site, it can be confusing if the focus order jumps around the page. Focus order generally follows the order of elements in the markup, so it is typically thrown off only in code: either by changing an element’s tabindex attribute, or by using CSS to visually reposition elements so that the visual order no longer matches the source order. Naturally, this falls under the developer role, so place a checkmark there. Many content management systems allow code to be added within rich text editors, so a content creator could accidentally add content that changes the focus order of elements. But since creating this kind of content isn’t a normal part of their responsibilities, don’t put a checkmark in the content creator column. Instead, the QA tester, who is responsible for reviewing all new content, should check the focus order of new pages, so place a checkmark in the QA tester column as well.
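The tabindex part of this is precise enough to model. In browsers, elements with a positive tabindex are visited first, in ascending order (ties in document order), followed by elements with tabindex="0" and natively focusable elements in document order, while a negative tabindex takes an element out of the Tab sequence. The sketch below approximates that rule over a hypothetical list of elements in source order and ignores disabled, hidden, and inert content. `TabItem`, `tabOrder`, and `positiveTabIndexes` are illustrative names.

```typescript
// Hypothetical model of one element, listed in document (source) order.
interface TabItem {
  id: string;
  tabIndex: number | null;    // null when the element has no tabindex attribute
  nativelyFocusable: boolean; // e.g. links with an href, buttons, form fields
}

// Approximates the browser's sequential (Tab key) focus order.
function tabOrder(items: TabItem[]): string[] {
  // Positive tabindex values, remembered with their source position for tie-breaking.
  const positive: { id: string; tabIndex: number; index: number }[] = [];
  // tabindex="0" and natively focusable elements without a tabindex, in source order.
  const natural: string[] = [];

  items.forEach((item, index) => {
    if (item.tabIndex !== null && item.tabIndex > 0) {
      positive.push({ id: item.id, tabIndex: item.tabIndex, index: index });
    } else if (item.tabIndex === 0 || (item.tabIndex === null && item.nativelyFocusable)) {
      natural.push(item.id);
    }
    // Anything else (negative tabindex, or not focusable) is skipped by the Tab key.
  });

  // Ascending tabindex; equal values keep document order.
  positive.sort((a, b) => a.tabIndex - b.tabIndex || a.index - b.index);
  return positive.map((p) => p.id).concat(natural);
}

// Positive tabindex values are the usual code-level cause of a jumpy focus order.
function positiveTabIndexes(items: TabItem[]): string[] {
  return items
    .filter((item) => item.tabIndex !== null && item.tabIndex > 0)
    .map((item) => item.id);
}
```

CSS repositioning can't be caught this way, because it changes where things appear rather than the order in which they receive focus. That is why tabbing through new pages by hand stays on the QA tester's list.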

A few helpful things to consider as you’re compiling your checklist

Use the team role definitions to decide which role a task should be assigned to—if a role is directly responsible for implementing or updating that part of the website, it makes sense that they should be on the lookout for potential accessibility issues surrounding that task

It’s OK if multiple roles are assigned to test a task; it just means extra eyes will be on the lookout for mistakes. The most important thing is that at least one role is assigned to each task

Reevaluate as your team changes or if there are still bugs repeatedly making their way into production
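Once the spreadsheet is filled in, the rules in this list are mechanical enough to check in code. The TypeScript below is a hypothetical sketch rather than an existing tool. It models the spreadsheet as rows of success criteria with the roles checked for each, seeded with the three example rows from above. The helper names `unassignedCriteria`, `checklistFor`, and `orphanedBy` are illustrative. Together they flag rows with no role, compile the customized checklist for a single role, and show which criteria would be left untested if a role left the team.

```typescript
// The four team roles defined in Step One.
type Role = "Content Creator" | "Designer" | "Developer" | "QA Tester";

// One row of the planning spreadsheet: a success criterion and the roles
// with a checkmark in that row.
interface ChecklistRow {
  criterion: string;
  summary: string;
  roles: Role[];
}

// The three rows worked through above; the remaining 75 success criteria
// would be added the same way.
const checklist: ChecklistRow[] = [
  { criterion: "2.4.1", summary: "Repeated sections can be skipped", roles: ["Designer", "Developer"] },
  { criterion: "2.4.2", summary: "All pages have a descriptive title", roles: ["Content Creator", "QA Tester"] },
  { criterion: "2.4.3", summary: "Focus order is logical", roles: ["Developer", "QA Tester"] },
];

// Rows with no checkmark at all; the plan isn't finished until this is empty.
function unassignedCriteria(rows: ChecklistRow[]): string[] {
  return rows.filter((row) => row.roles.length === 0).map((row) => row.criterion);
}

// The customized checklist for one role: every criterion it should test.
function checklistFor(role: Role, rows: ChecklistRow[]): string[] {
  return rows.filter((row) => row.roles.indexOf(role) !== -1).map((row) => row.criterion);
}

// Criteria that would have no tester if `departing` left the team
// (including any row that had no role to begin with).
function orphanedBy(departing: Role, rows: ChecklistRow[]): string[] {
  return rows
    .filter((row) => row.roles.filter((role) => role !== departing).length === 0)
    .map((row) => row.criterion);
}
```

With only these three rows, `checklistFor("QA Tester", checklist)` returns `["2.4.2", "2.4.3"]`, and `orphanedBy` returns an empty array for every role, because each example row has two checkmarks. That is the extra-eyes redundancy from the list above doing its job.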

Conclusion

Once you work your way through all 78 success criteria, you’re done…with the planning portion. After you decide who is testing what, you have to incorporate accessibility testing into your workflow. The people in each role need to know what they are expected to test and how often they should be testing. For instance, a rule of thumb for Sparkbox developers is that accessibility testing happens while new features are being built, before they are reviewed and merged. It may take a little while to find the right cadence for accessibility testing on your team, but the important thing is that you’re doing it.